Call it algorithmic media, not social media

One ordinary night in December, I was mindlessly scrolling Instagram, as I so often do, when I noticed:

less than 5% of the posts in my feed were from people I know in real life

10%-15% were from accounts I follow

and the rest was split between ads and posts from accounts the algorithm predicted I would be interested in.

At that moment it hit me: there is little social in social media these days.

This reminded me of the TV ad channels I used to watch as a kid. During school holidays, I would turn on the TV and inevitably find one of these channels, where commercials were broadcast all day. Typically, one commercial would run for hours. Back then I found them fascinating. They would essentially repeat the same thing over and over again until inevitably, at some point, I would start thinking: yes, I might need this. How have we made it so far without it? Luckily, I was underage and didn’t have a credit card back then.

I learned there is even a rule in marketing: you need to see something at least 7 times before you buy it.

Social media is the equivalent of the commercial channels of our age.

We voluntarily go there daily (or even several times a day) to be sold to, served ads, and nudged into believing that we need or want things we don’t.

That night, by deleting the app from my phone, I revoked my permission to be sold to. I don’t want to be the product of Instagram anymore.

Why we call them social

Platforms like Instagram, TikTok and Facebook earned the label social because at first they were social: they connected you online to people you actually knew and opened information pathways between sources and audiences.

First, early feeds were chronological and prioritized posts from your real-world connections. In doing so, they created a new public square online. To see posts from strangers, you had to follow or befriend them on the platform.

Second, as opposed to traditional media, these platforms let (and still let) information flow both ways between source and audience. Readers can react and reply to what they see in real time, and engage in conversation both with each other and with the author of whatever they are reading. Before these platforms, news consumption was a one-way street.

But things have changed and I believe the label social in social media is misleading. Algorithmic media describes the status quo much better.

Algorithmic feeds

Feeds are no longer chronological. They are algorithmic.

An algorithm decides what appears in the feed and in which order. It watches every move we make (pauses, scrolls, clicks, likes) and compares our behaviour to that of similar people, looking for patterns that predict what will hold our attention and what we will click on. Our behaviour carries far more weight than anything we say we want, and in any case nobody is asking. The goal of the algorithm is clear: to hold our attention for as long as humanly possible. It is not to inform us, or help us grow, or unite us. It is to keep us glued to the screen.
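To make the difference concrete, here is a toy sketch of the two kinds of feed. It is only an illustration under my own assumptions: the `Post` fields, the account names and the `predicted_engagement` scores are all made up, and real platforms use far more complex and undisclosed ranking systems.

```python
from dataclasses import dataclass


@dataclass
class Post:
    author: str
    minutes_ago: int             # age of the post
    from_followed: bool          # do I follow this account?
    predicted_engagement: float  # hypothetical model score between 0 and 1


def chronological_feed(posts):
    """Early-style feed: only accounts I follow, newest first."""
    followed = [p for p in posts if p.from_followed]
    return sorted(followed, key=lambda p: p.minutes_ago)


def algorithmic_feed(posts):
    """Engagement-ranked feed: any account, highest predicted engagement first."""
    return sorted(posts, key=lambda p: p.predicted_engagement, reverse=True)


# Invented example data, for illustration only.
posts = [
    Post("friend_anna", 5, True, 0.10),
    Post("outrage_account", 600, False, 0.95),
    Post("brand_ad", 120, False, 0.60),
    Post("friend_ben", 30, True, 0.20),
]

chrono = [p.author for p in chronological_feed(posts)]
algo = [p.author for p in algorithmic_feed(posts)]
print(chrono)
print(algo)
```

In this toy example the chronological feed shows only my two friends, newest first, while the engagement-ranked feed puts a ten-hour-old post from a stranger at the top and my friends at the bottom. Nothing in the second function asks who I know or what I said I wanted.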

The mirage of unfiltered news

Early trust in these platforms came from a simple promise: direct access to people and voices outside institutional gatekeepers. Distrust of classical mass media (often perceived as ideological, steered by the interests and agendas of the mighty) motivated many to join these platforms in search of unfiltered voices that supposedly had no agenda.

And so these platforms became the primary gateway to news, culture and opinion for many. The algorithm, something that has no civic values and whose only goal is to keep us watching, was entrusted with being the filter through which we learn what is happening not only with friends and family, but in the world at large.

The irony is that we landed in the most filtered environment ever built.

Because algorithms are not neutral. The fact that no human is involved in their moment-to-moment operation doesn’t make them neutral or unbiased. They also have an agenda.

When we are not the only actors

This also ignores the fact that we humans are no longer the only actors on these platforms. A March 2025 study by Lynnette Hui Xian Ng and Kathleen M. Carley estimates that roughly 20% of chatter about global events on social platforms comes from bots. I suspect this share is much greater by now, with Claude Code, OpenClaw and the like rapidly gaining adoption.

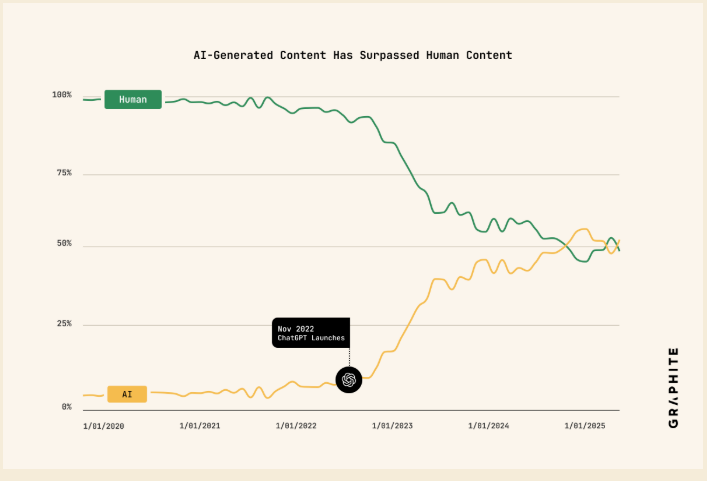

At the same time, much content that looks human-authored is now assisted or produced by AI. Ahrefs reports that 74.2% of new pages contain at least “some AI‑generated content,” and Graphite finds that, by some measures, more articles are now created with AI than by humans. Those figures demand a caveat (“AI content” covers a spectrum from AI‑assisted drafts to fully generated pieces), but the trend is clear: the line between human voice and machine output is blurring.

Source: Graphite

This matters because we are susceptible to influence. And now we might not even know by whom.

Influencing public opinion is an old business, and has always been a dirty one. From rigging elections by buying votes to hiring people to spread opinions in a concerted manner, this business is as old as humanity.

But AI, and now the newest trick in the playbook (swarms of coordinated bots), changes the game entirely. In a recent piece, Daniel Thilo Schroeder, Jonas R. Kunst, Gary Marcus and their co-authors show that the new scale is massive, the cost extremely low and the sophistication high: today’s AI-powered swarms behave like coordinated social organisms. They mimic local language and tone, build credibility gradually, and adapt in real time. On top of that, this is becoming an industrialized service available to buy: venture-backed platforms like Doublespeed now offer astroturfing[1] as a service.

We went to these platforms looking for authentic, unfiltered content and we are getting synthetic, unverified takes filtered by an algorithm with an agenda that is not aligned with our values.

The consequences of our susceptibility to influence are very real, as the Rohingya genocide of 2016-2017 illustrates.

When a country trusts the algorithm

Myanmar is a mostly Buddhist country with a Muslim minority, the Rohingya, living mostly in the west, close to the Indian and Bangladeshi borders. For most of their history, Muslims and Buddhists have co-existed more or less peacefully, with occasional outbursts of violence from the Buddhist majority. When the long military dictatorship, with its strict censorship and repression, ended in the 2010s, things didn’t improve for the Rohingya; they became worse. The Rohingya suffered sectarian violence and killings, much of it incited and spread via Facebook, which by 2016 was the main source of news for millions in Myanmar.[2]

The escalation began after a small Islamist militant group from within the Muslim minority, the Arakan Rohingya Salvation Army (ARSA), carried out attacks aimed at establishing a separatist Muslim state, killing and abducting several dozen non-Muslims and assaulting army posts.

The military exploited this by running anti-Rohingya propaganda through fake pages with harmless names like “Young Female Teachers” and “Let’s Laugh Casually”. The Facebook algorithm amplified their anti-Rohingya content because it generated engagement. This content spread views and opinions that made the killings in the street seem acceptable. Furthermore, back then, Facebook had only one Burmese-speaking moderator, who was based in Dublin. One. So no one was watching.

This paved the way for the army to respond with “a full-scale ethnic cleansing campaign aimed against the entire Rohingya community” (Harari, 2024, pp. 195-196). They destroyed hundreds of villages and killed between 7,000 and 25,000 unarmed civilians, among many other atrocities. While the inflammatory anti-Rohingya messages were created by flesh-and-blood extremists and without AI, it was Facebook’s algorithm that decided which posts to promote. In fact, a UN fact-finding mission concluded in 2018 that by disseminating hate-filled content, Facebook had played a determining role in the ethnic cleansing campaign.

There is no such thing as neutrality

Many assume that because an algorithm is a machine, it must be neutral — free of ideology, free of agenda. It is not.

Every algorithm reflects the choices of the people who built it and the objective it was given. Facebook’s algorithm was given one objective: maximize engagement. Engagement, it turns out, is maximized by outrage, fear, and conflict. That is the agenda. That is the bias. And unlike a biased journalist or a compromised editor, you cannot name it, debate it, or hold it accountable. The filter is opaque, and the accountability diffuse, at best: today no single actor owns the consequences of choices made by optimization systems.

This is why I believe that calling these platforms algorithmic media helps. It is a more accurate description of what these platforms are nowadays, and it is a way of breaking the spell: of making explicit the fact that they are not neutral, value-free artifacts. They have an agenda that is not aligned with mine, at least.

These platforms are the infomercial of our age, except that this time the commercial knows exactly what you want, what you fear, and how many times you’ve already been exposed. The 7-times rule didn’t disappear. It got personalized, automated, and scaled. Calling these platforms social keeps the infomercial running. Calling them algorithmic is how you recognize the sell, ideally before the seventh time.

Back then, not having a credit card was my accidental protection. Deleting Instagram was the adult version: a deliberate choice to revoke permission. To say: I don’t want to be the product anymore. Some months later, the effects are real. I compare myself less to others. I’ve spent less money. I read more long-form content than before.

But I think this can only be the start. The problem at stake is who manages, and who gets permission to filter and prioritize, the news we consume and the information that enters our field of attention. My unpopular take is that this is, and should once again be, a human problem. We need to stop delegating what influences us to machines. We need to build our own filters and actively curate what we allow into our attention. We need to return to signals of credibility that go beyond shallow likes and reach. And we need to return to direct, first-hand experience, not only experience mediated by a screen.

We gave away the filter. We can take it back, but first, let’s call it what it is.

References

Harari, Y. N. (2024). Nexus: A brief history of information networks from the Stone Age to AI. Random House.

Law, R., Guan, X., & Soulo, T. (2025, May 19). 74% of new webpages include AI content (study of 900k pages). Ahrefs Blog. https://ahrefs.com/blog/what-percentage-of-new-content-is-ai-generated/

Miles, T. (2018, March 13). U.N. investigators cite Facebook role in Myanmar crisis. Reuters. https://www.reuters.com/article/world/un-investigators-cite-facebook-role-in-myanmar-crisis-idUSKCN1GO2Q4/

Ng, L. H. X., & Carley, K. M. (2025). A global comparison of social media bot and human characteristics. Scientific Reports, 15, 10973. https://doi.org/10.1038/s41598-025-96372-1

Paredes, J. L., Smith, E., Druck, G., & Benson, B. (2025, October 23). More articles are now created by AI than humans. Graphite Five Percent. https://graphite.io/five-percent/more-articles-are-now-created-by-ai-than-humans

Schroeder, D. T., Cha, M., Baronchelli, A., Bostrom, N., Christakis, N. A., Garcia, D., Goldenberg, A., Kyrychenko, Y., Leyton-Brown, K., Lutz, N., Marcus, G., Menczer, F., Pennycook, G., Rand, D. G., Ressa, M., Schweitzer, F., Song, D., Summerfield, C., Tang, A., . . . Kunst, J. R. (2026). How malicious AI swarms can threaten democracy. Science, 391(6783), 354–357. https://doi.org/10.1126/science.adz1697

[1] According to the Oxford dictionary, astroturfing is “the deceptive practice of presenting an orchestrated marketing or public relations campaign in the guise of unsolicited comments from members of the public.” Accessed 3 March 2026.

According to Wikipedia: “Astroturfing is the deceptive practice of hiding the sponsors of an orchestrated message or organization to make it appear as though it originates from, and is supported by, unsolicited grassroots participants.” Accessed 3 March 2026.

[2] This section relies heavily on Harari’s account of the events in Myanmar, in particular pp. 195-197.